Vibe coding - 6 words to know

I spent a lot of time playing around with vibe coding last year. The promise is incredible: anyone can build amazing software. The reality is a bit more technical.

In this post, I share some of the terms I picked up along the way, and explain why I think they’re important.


Determinism

Computers are essentially sophisticated calculators: you feed in the sum and you get the result. And just as 1+1 always equals 2, if you feed in the same instructions you will always get the same result. If you’ve grown up with classic software, this is likely something you take for granted.

Large language models (LLMs) work differently. They are non-deterministic: they don’t compute answers, they predict the next most likely option.

Example: 1+1

A standard (deterministic) computer executes specific rules, in this case arithmetic:

- The symbols ‘1’, ‘+’, ‘1’ are parsed according to a grammar

- The operation ‘+’ maps to the instruction ‘addition’

- The instruction looks up or computes the result in a number system.

- The output ‘2’ is returned
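The four steps above can be sketched as a tiny evaluator. This is a minimal illustration, not how a real CPU or language runtime is built, and the `OPERATIONS` table and `evaluate` function are hypothetical names chosen for the example.

```python
import operator

# Hypothetical lookup table: each operator symbol maps to exactly one rule.
OPERATIONS = {
    "+": operator.add,
    "-": operator.sub,
    "*": operator.mul,
}


def evaluate(expression: str) -> int:
    """Deterministically evaluate a simple 'a op b' expression such as '1+1'."""
    # Step 1: parse the symbols according to a (very small) grammar.
    for symbol in OPERATIONS:
        left, found, right = expression.partition(symbol)
        if found:
            break
    else:
        raise ValueError(f"no supported operator in {expression!r}")

    # Step 2: map the operator symbol to its instruction.
    instruction = OPERATIONS[symbol]

    # Step 3: compute the result in the integer number system.
    result = instruction(int(left), int(right))

    # Step 4: return the output.
    return result


# Same input, same output, every time.
print([evaluate("1+1") for _ in range(5)])  # [2, 2, 2, 2, 2]
```

There is no step where the program weighs up alternatives; the rule either applies or raises an error.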

There is exactly one output (2). Given the same input, on any machine, at any time, the output will be exactly the same; anything else is a bug.

LLMs do not execute arithmetic; they pattern-match to predict the most likely output:

- The LLM looks for patterns that, based on its training, match ‘1+1 =’

- It most likely recognises ‘1 + 1 = 2’ as a familiar pattern, and most likely estimates this as the most plausible continuation.

- It most likely returns the continuation as 2.
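By contrast, the LLM’s last step is closer to drawing from a weighted lottery. The sketch below fakes that step with a hand-written probability table. The probabilities in `NEXT_TOKEN_PROBS` are invented for illustration and not taken from any real model, and a real model samples over its entire vocabulary rather than four options.

```python
import random
from collections import Counter

# Invented probabilities for what might follow the prompt "1+1 =".
# A real model produces a distribution like this over its whole vocabulary.
NEXT_TOKEN_PROBS = {
    "2": 0.97,
    "3": 0.01,
    "11": 0.01,
    "two": 0.01,
}


def predict_next_token(probs: dict[str, float], rng: random.Random) -> str:
    """Sample one continuation, weighted by its (assumed) probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


rng = random.Random()  # unseeded: each run of the program can differ
samples = [predict_next_token(NEXT_TOKEN_PROBS, rng) for _ in range(1000)]
# Mostly "2", but occasionally something else.
print(Counter(samples).most_common())
```

Most samples look exactly like the deterministic answer, which is precisely why the occasional odd one out is so easy to miss.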

The answer is not a calculated result but a predicted one. The outcome is probabilistic, and repeating the same prompt can yield different outcomes.

So what?

Determinism runs deep: all of us learnt maths by counting out 1 + 1 on our fingers. We take it for granted that solutions are calculated, and that software is predictable and consistent.

Most of the time, the solution produced by a deterministic system looks exactly the same as one produced by a non-deterministic system. Except sometimes it doesn’t, and this has huge implications.

Hallucinations

An AI hallucination is when an AI model produces a confident-sounding but factually incorrect output.

We like to anthropomorphize things, so we often describe the AI as ‘making things up’, ‘imagining things’, or even ‘lying’. But this doesn’t really represent what’s going on.

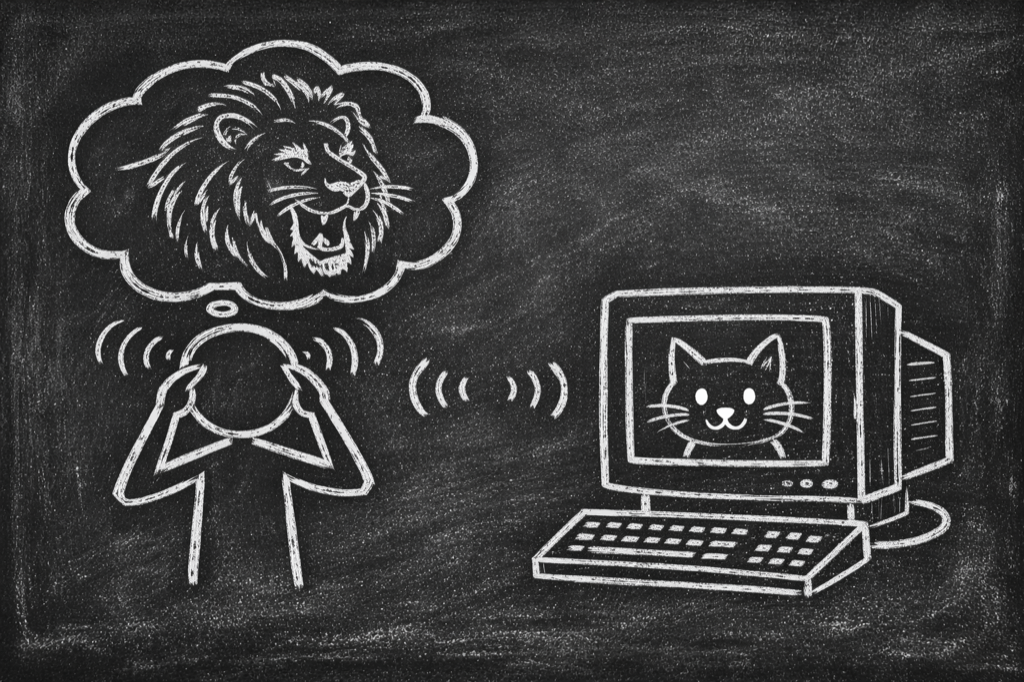

An LLM has no concept of truth, and no innate reasoning capability (intelligence?). It predicts the most likely outcome based on its training data, but has no understanding of the outcome itself.

It presents mistakes with confidence because it does not know they are mistakes; it does not know what a mistake is. In fact, it doesn’t know anything. It only predicts.

Feature not a bug

Hallucinations are inherent to how LLMs work. They are a feature, not a bug. And in spite of this glaringly obvious design flaw, LLMs are so game-changing, so incredibly important and powerful, that we need to learn to live with it.

And this is tricky. We have all corrected an LLM when it said something we knew was wrong, but what happens when you’re working with an LLM in a new domain? How do you tell what is true and what isn’t?

Image adapted from the ‘This is fine’ meme. Original credit: KC Green.

Tests

When using an LLM to write code, hallucinations typically show up as a complete disconnect from reality. The LLM has performed some work and confidently explains that everything is complete and working perfectly, but in reality everything is on fire and nothing executes. Asking the LLM to check or repeat its work won’t help, because the LLM has no concept of what is correct.

Tests are a coping mechanism for working with non-deterministic software. They are a way of tying what was produced back to reality.

When using an LLM to write code, the product is the code, and the code (hopefully) does something to produce an output. So here you can use a standard test-driven development (TDD) approach: write a test that checks the output matches what was expected. The LLM can then keep trying until the output meets the test criteria.

You can now confirm that the correct output was produced, but you can’t say how it was produced; the LLM could have taken any path to get there, sensible or otherwise. So you need to add more and more checkpoints at intermediate steps to ensure the code follows the expected path. Depending on the extent of that coverage, you can then use a non-deterministic LLM to produce deterministic code and consistent outputs.
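One way to add those checkpoints is to split the work into small steps and test each one, not just the final output. The three-step pipeline below (`parse_prices`, `apply_discount`, `format_total`) is a hypothetical example; the point is that each step gets its own assertion, so a wrong path is caught even when the end result happens to look right.

```python
def parse_prices(raw: str) -> list[float]:
    """Step 1: turn '10.00, 5.50' into [10.0, 5.5]."""
    return [float(part) for part in raw.split(",") if part.strip()]


def apply_discount(prices: list[float], rate: float) -> list[float]:
    """Step 2: reduce each price by `rate` (0.1 means 10% off)."""
    return [round(price * (1 - rate), 2) for price in prices]


def format_total(prices: list[float]) -> str:
    """Step 3: sum the prices and format them as currency."""
    return f"${sum(prices):.2f}"


def pipeline(raw: str, rate: float) -> str:
    return format_total(apply_discount(parse_prices(raw), rate))


# End-to-end test: checks the output only.
assert pipeline("10.00, 5.50", 0.1) == "$13.95"

# Checkpoint tests: check the path taken to get there.
assert parse_prices("10.00, 5.50") == [10.0, 5.5]
assert apply_discount([10.0, 5.5], 0.1) == [9.0, 4.95]
assert format_total([9.0, 4.95]) == "$13.95"
print("all checkpoints passed")
```

If a regenerated `apply_discount` started discounting the total instead of each item, the end-to-end test might still pass on some inputs, but its checkpoint would flag the change.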

Note: this situation gets weirder when the LLM output is the product (i.e. agentic work), but that’s for another time.

Context

We know from experience that a good contextual brief makes an assignment easier. But how much information should a brief contain?

- Too little and you're trying to complete a task without knowing the full facts. You can easily misunderstand what was expected and take a wrong turn.

- Too much and you can be drowning in documentation. The relevant information gets buried in noise.

- Too detailed, well-defined and complete, and there is no work left to be done.

Now imagine you can read the brief only once and have to memorise it. Human short-term memory can hold around four chunks of information at a time. LLMs are similar: they need a good brief to perform the expected task, and can only hold so much in their context window at once.

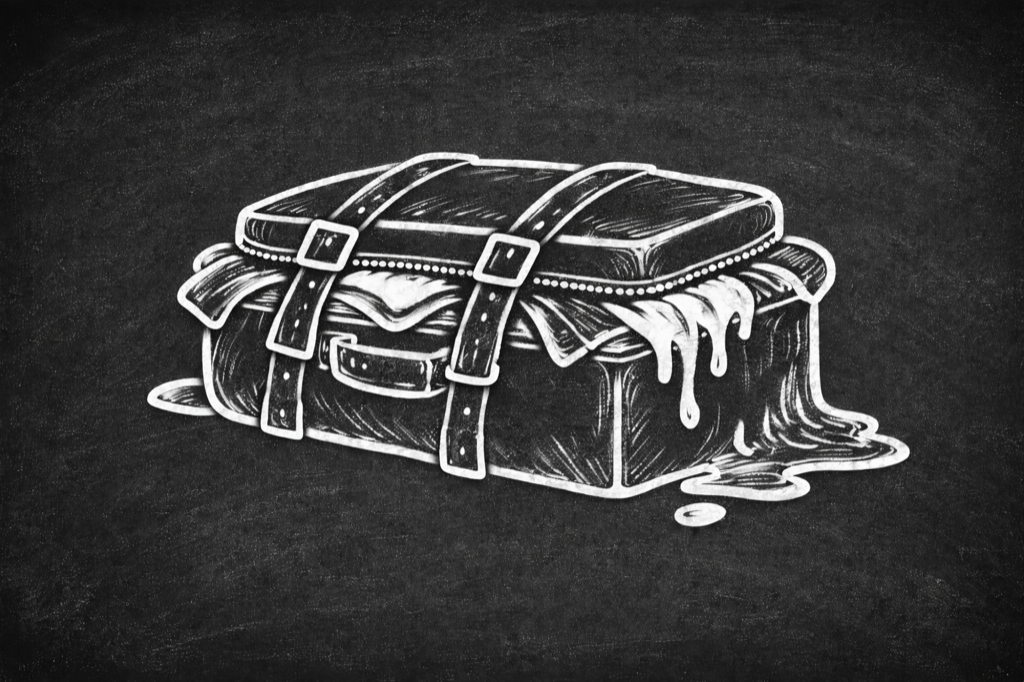

In practice, everything starts out great. You’re shipping code and everything is performing as expected. But as your software grows, you’ll inevitably hit the context-window limit. The LLM starts dropping the ball, bugs creep in & things get weirder.

We can compensate for this by borrowing classic software patterns and architecting the solution to compartmentalise its functions. Consider how the Bezos API mandate enabled Amazon to scale by reducing the dependencies, complexity, and context that each team needed to maintain and control.
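As a rough illustration of compartmentalising, the sketch below has an order module that depends only on a small `PaymentGateway` interface rather than on any payment implementation. When asking an LLM to work on `OrderService`, that interface is all the context it needs. All class and method names here are hypothetical.

```python
from typing import Protocol


class PaymentGateway(Protocol):
    """The only thing OrderService knows about payments: one method."""

    def charge(self, customer_id: str, amount_cents: int) -> bool: ...


class OrderService:
    def __init__(self, payments: PaymentGateway) -> None:
        self.payments = payments

    def place_order(self, customer_id: str, amount_cents: int) -> str:
        # No knowledge of how charging works, only that it succeeds or fails.
        if amount_cents <= 0:
            raise ValueError("amount must be positive")
        paid = self.payments.charge(customer_id, amount_cents)
        return "confirmed" if paid else "payment_failed"


class FakeGateway:
    """A stand-in implementation; a real one could live in another module or team."""

    def __init__(self, approve: bool) -> None:
        self.approve = approve
        self.calls: list[tuple[str, int]] = []

    def charge(self, customer_id: str, amount_cents: int) -> bool:
        self.calls.append((customer_id, amount_cents))
        return self.approve


print(OrderService(FakeGateway(approve=True)).place_order("c-42", 1999))  # confirmed
```

Each side can then be built, tested, or regenerated in its own, smaller context, as long as the interface does not change.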

But how much context can each function maintain? That depends on tokens.

Tokens

Tokens are the basic units of text that LLMs break input into, process, and generate.

When you send a prompt to an LLM, it doesn’t read words like we do. Instead, it breaks the text into chunks called tokens, on average around 3-4 characters each for English text. Once it has all of the tokens (the context), it generates the response, again at the token level, predicting the next most likely token based on everything that has come before.
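To make the idea concrete, here is a toy tokenizer that splits text greedily into the longest pieces found in a tiny, made-up vocabulary. Real LLMs use learned subword schemes such as byte-pair encoding, with vocabularies of tens of thousands of entries, so treat `TOY_VOCAB` and `tokenize` as illustrative only. The hypothetical `estimate_tokens` helper applies the rough 4-characters-per-token rule of thumb for English text.

```python
import math

# Made-up vocabulary of subword chunks; real vocabularies are learned from data.
TOY_VOCAB = {"vibe", " cod", "ing", " is", " fun", " ", "!"}


def tokenize(text: str, vocab: set[str]) -> list[str]:
    """Greedy longest-match split; unknown characters become single-char tokens."""
    tokens = []
    i = 0
    longest = max(len(piece) for piece in vocab)
    while i < len(text):
        # Try the longest possible chunk first, then shorter ones.
        for size in range(min(longest, len(text) - i), 0, -1):
            piece = text[i : i + size]
            if piece in vocab or size == 1:
                tokens.append(piece)
                i += size
                break
    return tokens


def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Rule-of-thumb token count for English prose; not exact for any real model."""
    return math.ceil(len(text) / chars_per_token)


print(tokenize("vibe coding is fun!", TOY_VOCAB))
# ['vibe', ' cod', 'ing', ' is', ' fun', '!']
print(estimate_tokens("vibe coding is fun!"))  # 5
```

Note that the model never sees the word "coding", only the pieces " cod" and "ing".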

The context window is the maximum number of tokens an LLM can hold and ‘reason from’ at once. This includes system instructions, conversation history, the last prompt, & all previously generated output.
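Because everything in that list shares one budget, a chat tool has to decide what to drop once a conversation gets long. A common, simple strategy is to keep the system instructions and the latest prompt, reserve room for the reply, and discard the oldest history first. The sketch below assumes that strategy, uses the rough 4-characters-per-token estimate, and uses a deliberately tiny window; `count_tokens` and `fit_to_window` are hypothetical helpers.

```python
import math


def count_tokens(text: str) -> int:
    # Rough estimate: ~4 characters per token (not any real tokenizer).
    return math.ceil(len(text) / 4)


def fit_to_window(system: str, history: list[str], prompt: str,
                  window: int, reserved_for_output: int) -> list[str]:
    """Return the messages to send, dropping the oldest history until they fit."""
    budget = window - reserved_for_output - count_tokens(system) - count_tokens(prompt)
    if budget < 0:
        raise ValueError("system instructions and prompt alone exceed the window")

    kept: list[str] = []
    # Walk backwards so the most recent history is kept first.
    for message in reversed(history):
        cost = count_tokens(message)
        if cost > budget:
            break  # everything older than this is forgotten
        kept.insert(0, message)
        budget -= cost
    return [system, *kept, prompt]


history = ["a" * 40, "b" * 40, "c" * 40]  # three turns of ~10 tokens each
sent = fit_to_window("rules", history, "next?", window=40, reserved_for_output=10)
print(len(sent))  # 4: system, the two newest turns, and the prompt
```

The oldest turn simply vanishes from what the model sees, which is one way "the LLM starts dropping the ball" in practice.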

The maximum number of tokens has grown with each generation of LLM (the original GPT-3 had a ~2K-token window, GPT-3.5 ~4K, GPT-4 Turbo 128K, and GPT-5 up to ~400K). And generally, a larger window allows better, more nuanced, ‘reasoned’ results.

The cost

Every token processed requires computation, computation consumes energy, and energy costs money. The cost of goods is per token.
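The arithmetic itself is simple, which is part of why it’s easy to lose track of. Providers typically bill input (prompt) tokens and output (generated) tokens at separate per-million rates. The prices in this sketch are made-up round numbers for illustration, not any provider’s actual rates, and `request_cost` is a hypothetical helper.

```python
# Illustrative prices in USD per million tokens (assumed, not real rates).
PRICE_PER_M_INPUT = 2.00
PRICE_PER_M_OUTPUT = 8.00


def request_cost(input_tokens: int, output_tokens: int) -> float:
    """Cost of usage: tokens in and tokens out are billed separately."""
    input_cost = (input_tokens / 1_000_000) * PRICE_PER_M_INPUT
    output_cost = (output_tokens / 1_000_000) * PRICE_PER_M_OUTPUT
    return input_cost + output_cost


# A session totalling 1M tokens of context sent and 200K tokens generated.
print(f"${request_cost(1_000_000, 200_000):.2f}")  # $3.60
```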

But pricing models obscure this. A million tokens sounds generous, but it's abstract - hard to benchmark, easy to burn through.
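One reason a million tokens goes faster than expected: since the context window includes the conversation history, a chat-style session typically resends the whole conversation on every turn. Assuming every turn adds a fixed number of tokens and nothing is cached or trimmed (both simplifications), the total processed grows with the square of the number of turns. `tokens_processed` is a hypothetical helper for this back-of-the-envelope sum.

```python
def tokens_processed(turns: int, tokens_per_turn: int) -> int:
    """Total input tokens across a session where each turn resends all prior turns.

    Turn k sends k * tokens_per_turn tokens (the whole history so far), so the
    total is tokens_per_turn * (1 + 2 + ... + turns).
    """
    return sum(k * tokens_per_turn for k in range(1, turns + 1))


# 30 back-and-forth turns, each adding ~2,000 tokens (code, errors, replies).
print(tokens_processed(30, 2_000))  # 930000
```

Thirty turns of a few pages each is already close to a million tokens, even though no single message looked large.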

Token caps have become a growth lever. Start users on generous limits, let them get hooked, then throttle back. The paywall appears: upgrade to Pro. It’s the classic playbook.

The same playbook is being applied to drive adoption (& job losses?) at a societal level. The big labs are subsidising adoption (e.g. GitHub Copilot was reportedly subsidised by around $20 per user each month). What happens when the subsidies stop?

Vibe coding

Vibe coding is the aspirational product pitch of GenAI coding.

Technology, startup stardom & untold riches are no longer the preserve of the technology elite. Now anyone can create software! Just whisper what you want in easy, general terms (vibes), and hey presto, out pops a fully formed, working product that’s exactly as you imagined.

The reality

This is great when it works. But more often than not, the results are mediocre: a tangle of confident-sounding code that doesn’t land, or derivative work that sits squarely in the middle.

This is not to say AI-assisted coding is not valuable; it is, immensely. But until the scaffolding matures to handle more of the engineering discipline automatically, creating great software needs more than just vibes.